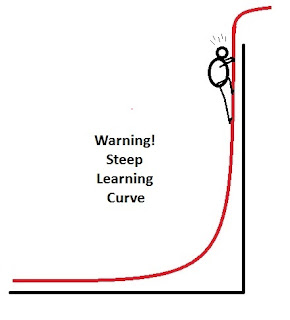

It can be difficult to work your way through hierarchical Bayes choice modeling. There is just too much new to learn. If nothing else, one gets lost in all ways that choice data can be collected and analyzed. Then there is all this output from the estimation procedure that you have probably never seen before. What is this Markov Chain Monte Carlo (MCMC)? What if I don't want to become a Bayesian? How does one get started?

It can be difficult to work your way through hierarchical Bayes choice modeling. There is just too much new to learn. If nothing else, one gets lost in all ways that choice data can be collected and analyzed. Then there is all this output from the estimation procedure that you have probably never seen before. What is this Markov Chain Monte Carlo (MCMC)? What if I don't want to become a Bayesian? How does one get started?

We can reduce the steepness of the curve by learning how to use one function from the R package bayesm to analyze one type of choice data. Hopefully, once you have stepped through a simple example, you will no longer be completely confused when reading introductions and tutorials. My claim is not that you will understand, but that you will stop feeling so overwhelmed.

Your choice: Brand A at some $ versus Brand B at some $ vs. No Thank You

This is a common choice setting. The researcher wants to learn about price sensitivity between two brands in the market. Why not generate a number of choice sets with both brands at varying prices along with the option to refuse to buy either brand. The last option is needed because customers will leave the market if prices are too high. We will keep it simple by using three price points for Brand A and three price points for Brand B and crossing the two pricing factors. That is, we will use a complete factorial design with the 3x3=9 cells obtained from the table with Brand A prices as the rows and Brand B prices as the columns.

Below is what I had in mind. Nine choice sets with three alternatives in each choice set. Price 1 is the lowest price, and Price 3 is the highest price. The price points can be different for the two brands because we will be estimating separate effects for Brand A's and Brand B's prices (called alternative-specific effects). The proportions show the likelihood of a respondent selecting each alternative in each choice set. You should note that Brand A seems to be the preferred brand with higher choice probabilities, except in the Choice Set #7 where Brand A is at its highest price and Brand B is at its lowest price. You should also notice that this is a main-effects design with the likelihood of selecting Brand B remaining the same at each price level of Brand A. That is, the pattern "0.3 - 0.2 - 0.1" is repeated for each of Brand A's price levels. A similar pattern can be seen for Brand A's prices within Brand B's price levels (0.5 - 0.4 - 0.3).

Choice

|

Pricing Level

|

Proportion Selecting

Option

|

|||

Set

|

Brand A

|

Brand B

|

Brand A

|

Brand B

|

Neither

|

1

|

Price 1

|

Price 1

|

0.5

|

0.3

|

0.2

|

2

|

Price 1

|

Price 2

|

0.5

|

0.2

|

0.3

|

3

|

Price 1

|

Price 3

|

0.5

|

0.1

|

0.4

|

4

|

Price 2

|

Price 1

|

0.4

|

0.3

|

0.3

|

5

|

Price 2

|

Price 2

|

0.4

|

0.2

|

0.4

|

6

|

Price 2

|

Price 3

|

0.4

|

0.1

|

0.5

|

7

|

Price 3

|

Price 1

|

0.3

|

0.3

|

0.4

|

8

|

Price 3

|

Price 2

|

0.3

|

0.2

|

0.5

|

9

|

Price 3

|

Price 3

|

0.3

|

0.1

|

0.6

|

Randomly Generating the Data So You Can Step Through the Analysis

Choice modeling is an "as if" statistical model. It is assumed that people behave as if they assigned utility to the attribute levels varied in a choice design. Products are attribute bundles, so the value of the product is a function of the utilities of its component attribute levels. One should be able to look at the proportions in the above table and infer what utilities would have produced such data. That is, Brand A tends to be selected more often than Brand B, therefore Brand A must have a higher utility than Brand B. Similarly, one can observe price sensitivity in the decreasing proportions selecting each brand as that brand's price increases.

My goal was to keep it simple with a 3x3 complete factorial with varying choice set probabilities that would "reveal" the underlying utilities. Here is the R-code to generate 100 respondents making choices from the above nine sets. This code was written to be easy to follow. It creates a NULL object "choice" outside of the loop to store the dataset. The loop repeats 100 times by row binding a new respondent to choice. Data for only 100 respondents were created because I wanted to minimize the run time for the hierarchical Bayes estimation. Each of the nine choice sets is called a cell. For each loop all nine cells are reset to NULL, and a new choice is made using the probabilities from the above table.

# set seed in order to replicate

set.seed(362013)

#create structure for choice data

choice<-NULL

#simulates 100 respondents and 9 choice sets

for (i in 1:100) {

cell1<-NULL

cell2<-NULL

cell3<-NULL

cell4<-NULL

cell5<-NULL

cell6<-NULL

cell7<-NULL

cell8<-NULL

cell9<-NULL

cell1<-rbind(cell1,which.max(rmultinom(1, size = 1, prob = c(0.5,0.3,0.2))))

cell2<-rbind(cell2,which.max(rmultinom(1, size = 1, prob = c(0.5,0.2,0.3))))

cell3<-rbind(cell3,which.max(rmultinom(1, size = 1, prob = c(0.5,0.1,0.4))))

cell4<-rbind(cell4,which.max(rmultinom(1, size = 1, prob = c(0.4,0.3,0.3))))

cell5<-rbind(cell5,which.max(rmultinom(1, size = 1, prob = c(0.4,0.2,0.4))))

cell6<-rbind(cell6,which.max(rmultinom(1, size = 1, prob = c(0.4,0.1,0.5))))

cell7<-rbind(cell7,which.max(rmultinom(1, size = 1, prob = c(0.3,0.3,0.4))))

cell8<-rbind(cell8,which.max(rmultinom(1, size = 1, prob = c(0.3,0.2,0.5))))

cell9<-rbind(cell9,which.max(rmultinom(1, size = 1, prob = c(0.3,0.1,0.6))))

row<-cbind(cell1,cell2,cell3,cell4,cell5,cell6,cell7,cell8,cell9)

choice<-rbind(choice,row)

}

choice

apply(choice,2,table)

choice_df<-data.frame(cbind(1:100,choice))

names(choice_df)<-c("id","set1","set2","set3","set4","set5","set6","set7","set8","set9")

choice_df

I used the R function rmultinom to generate the multinomial choice using the table proportions. The resulting dataset has the following format, showing each respondent as a row and the alternative that was selected in each choice set (1=Brand A, 2=Brand B, and 3=Neither).

id

|

set1

|

set2

|

set3

|

set4

|

set5

|

set6

|

set7

|

set8

|

set9

|

1

|

2

|

2

|

1

|

3

|

1

|

3

|

1

|

3

|

2

|

2

|

1

|

3

|

1

|

1

|

3

|

3

|

1

|

2

|

3

|

3

|

1

|

3

|

3

|

3

|

3

|

1

|

1

|

3

|

1

|

4

|

3

|

2

|

1

|

1

|

3

|

1

|

1

|

3

|

1

|

5

|

1

|

1

|

3

|

1

|

1

|

1

|

1

|

3

|

3

|

One should always begin by looking at the counts for each of the choice sets. Had we not known how these data were generated, we could still observe a pattern. Brand A is selected more often than Brand B. Price sensitivity for Brand A is clear with Price 1 > Price 2 > Price 3. Although there is some price inconsistency for Brand B between Sets 4 and 5, the marginal totals indicate that Brand B was selected 70 times at Price 1 and 60 times at Price 2. Exploratory analysis of the raw counts is always useful, however, it becomes a more difficult task when the design is not balanced (e.g., when attribute levels do not appear equally often or when attributes are correlated).

Option

|

set1

|

set2

|

set3

|

set4

|

set5

|

set6

|

set7

|

set8

|

set9

|

1

|

58

|

51

|

58

|

47

|

39

|

41

|

37

|

35

|

46

|

2

|

26

|

21

|

8

|

15

|

18

|

9

|

29

|

21

|

5

|

3

|

16

|

28

|

34

|

38

|

43

|

50

|

34

|

44

|

49

|

On to the Design Matrices

So far, we have a data frame with the categorical dependent variable called choice. Now we need to attach a design matrix for each of the nine choice sets. It might be easiest to explain by presenting the R code. Design #1 is for the first choice set. There are 6 columns for the 6 parameters to be estimated in this design. That is, there are 2 dfs for the three alternatives, plus 2 dfs for Brand A price and 2 dfs for Brand B price. I have used dummy coding so that the third alternative, Neither, is the base with only 0s. The columns, in order, represent the effects for Brand A, Brand B, Brand A Price 1, Brand A Price 2, Brand B Price 1, and Brand B Price 2. Brand A and B appear in every choice set; only the effects for price vary.

design1<-matrix(c(

1,0,1,0,0,0,

0,1,0,0,1,0,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design1

design2<-matrix(c(

1,0,1,0,0,0,

0,1,0,0,0,1,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design2

design3<-matrix(c(

1,0,1,0,0,0,

0,1,0,0,0,0,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design3

design4<-matrix(c(

1,0,0,1,0,0,

0,1,0,0,1,0,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design4

design5<-matrix(c(

1,0,0,1,0,0,

0,1,0,0,0,1,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design5

design6<-matrix(c(

1,0,0,1,0,0,

0,1,0,0,0,0,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design6

design7<-matrix(c(

1,0,0,0,0,0,

0,1,0,0,1,0,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design7

design8<-matrix(c(

1,0,0,0,0,0,

0,1,0,0,0,1,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design8

design9<-matrix(c(

1,0,0,0,0,0,

0,1,0,0,0,0,

0,0,0,0,0,0),

nrow=3,ncol=6,byrow=TRUE)

design9

Creating a List Structure for bayesm

We will be using the function rhierMnlRwMixture from the R package bayesm to estimate the parameters of our choice model, and bayesm uses a list structure to store the y (choices), the X (design matrices), and the z (covariates). We will not be discussing covariates in this post, but you should be aware that bayesm offers much greater capability than we will be covering. For instance, the function's name, r-hier-Mnl-Rw-Mixture, suggests a hierarchical multinomial random walk with mixtures of normal distributions. We, however, will be keeping it simple.

The following code will create the list structures that bayesm needs. It is somewhat more general than we need for this example given that every individual sees the same nine choice sets. Briefly, id is used to select only those rows with the same id value. This allows us to have multiple rows for each respondent, as we might if the data were in long rather than wide format. The list will be stored in lgtdata. Then, we loop through each respondent to create a list of 100 with y and X.

id=levels(as.factor(choice_df[,1]))

lgtdata=NULL

for (i in 1:100)

{

respdata=choice_df[choice_df[,1]==id[i],]

ty<-NULL

tdesign<-NULL

ty=c(ty,respdata$set1)

tdesign=rbind(tdesign,design1)

ty=c(ty,respdata$set2)

tdesign=rbind(tdesign,design2)

ty=c(ty,respdata$set3)

tdesign=rbind(tdesign,design3)

ty=c(ty,respdata$set4)

tdesign=rbind(tdesign,design4)

ty=c(ty,respdata$set5)

tdesign=rbind(tdesign,design5)

ty=c(ty,respdata$set6)

tdesign=rbind(tdesign,design6)

ty=c(ty,respdata$set7)

tdesign=rbind(tdesign,design7)

ty=c(ty,respdata$set8)

tdesign=rbind(tdesign,design8)

ty=c(ty,respdata$set9)

tdesign=rbind(tdesign,design9)

lgtdata[[i]]=list(y=ty,X=as.matrix(tdesign))

}

To verify that the code functioned as intended, you need to view the elements of lgtdata. You index the list as lgtdata[[1]] for the first respondent and lgtdata[[100]] for the last respondent. Here are the data for the first respondent. The design matrices have been stacked in $X, and the choices have been placed in the vector $y. Once you tell rhierMnlRwMixture that there are three alternatives per choice set, $X will be subdivided and matched with $y.

$y

[1] 2 2 1 3 1 3 1 3 2

$X

[,1] [,2] [,3] [,4] [,5] [,6]

[1,] 1 0 1 0 0 0

[2,] 0 1 0 0 1 0

[3,] 0 0 0 0 0 0

[4,] 1 0 1 0 0 0

[5,] 0 1 0 0 0 1

[6,] 0 0 0 0 0 0

[7,] 1 0 1 0 0 0

[8,] 0 1 0 0 0 0

[9,] 0 0 0 0 0 0

[10,] 1 0 0 1 0 0

[11,] 0 1 0 0 1 0

[12,] 0 0 0 0 0 0

[13,] 1 0 0 1 0 0

[14,] 0 1 0 0 0 1

[15,] 0 0 0 0 0 0

[16,] 1 0 0 1 0 0

[17,] 0 1 0 0 0 0

[18,] 0 0 0 0 0 0

[19,] 1 0 0 0 0 0

[20,] 0 1 0 0 1 0

[21,] 0 0 0 0 0 0

[22,] 1 0 0 0 0 0

[23,] 0 1 0 0 0 1

[24,] 0 0 0 0 0 0

[25,] 1 0 0 0 0 0

[26,] 0 1 0 0 0 0

[27,] 0 0 0 0 0 0

Finally We Can Run the Analysis

We need to set a couple of values and pass those values along with the data to the function. R=20000 is the number of MCMC draws, and keep=10 is the thinning parameter telling the function to keep every 10th draw.

mcmc=list(R=20000,keep=10)

library(bayesm)

out=rhierMnlRwMixture(Data=list(p=3,lgtdata=lgtdata),Prior=list(ncomp=1),Mcmc=mcmc)

plot(out$loglike,type="l")

trace<-t(apply(out$betadraw,c(2,3),mean))

matplot(trace, type="l")

beta.501_2000<-apply(out$betadraw[,,501:2000], 2, mean)

beta.1001_2000<-apply(out$betadraw[,,1001:2000], 2, mean)

beta.1501_2000<-apply(out$betadraw[,,1501:2000], 2, mean)

cbind(beta.501_2000,beta.1001_2000,beta.1501_2000)

The rhierMnlRwMixture function took about 10 minutes on my PC to complete the 20,000 draws. The coefficients for the parameters in our design matrix are stored in out$betadraw, which is a 100 x 6 x 2000 array. The first two dimensions, the 100 x 6, seem reasonable. If hierarchical Bayes yields individual-level beta weights, then we should have six coefficients for each of the 100 respondents. But we do not have one estimate of each coefficient for each respondent. There were 20,000 draws per respondent from which we kept every 10th for 2000 in total.

I have included a number of lines after rhierMnlRwMixture that require some time to explain because they involve issues about whether the MCMC draws have entered a high-probability region (often called convergence). Appendix A from the book Bayesian Statistics and Marketing reviews these commands when discussing another example. This chapter by Rossi and Allenby also describes this same study. Both readings are challenging, but neither is overly technical.

However, I do want to finish with the last four lines of codes. I want to examine the aggregate level estimates for my six parameters after averaging over respondents. If I discard the first 500 draws, I will average draw 501 to 2000. Thus, the three apply operations discard a different number of initial draws (500, 1000, and 1500). One of the conditions for entering a high-probability region is that the estimates should not fluctuate widely. Let's take a look.

beta.501_2000

|

beta.1001_2000

|

beta.1501_2000

|

|

Brand A

|

-0.08

|

-0.08

|

-0.04

|

Brand B

|

-1.99

|

-2.02

|

-2.01

|

Brand A, Price 1

|

0.76

|

0.74

|

0.69

|

Brand A, Price 2

|

0.11

|

0.09

|

0.05

|

Brand B, Price 1

|

1.39

|

1.43

|

1.43

|

Brand B, Price 2

|

1.16

|

1.19

|

1.18

|

We do not see much fluctuation, but do these coefficients reflect what we knew when we generated the data? Given the dummy coding, the coefficients appear reasonable. The brands are ordered as expected, given that "Neither" was the base alternative and has a coefficient of zero (Neither = Brand A > Brand B). The highest price is Price 3, which is also coded 0, therefore price sensitivity seems to be in the correct direction. But these coefficients do not represent linear effects, and we should be reluctant to reach general conclusions. We need a simulator to access the impact of changing prices for the two brands.

As you might recall, I began this post with the hope that it would help the reader to run their first hierarchical Bayes choice model using R. With that in mind, I tried to stay focused on running the analysis. For those wanting to understand the details, Kenneth Train provides an updated online textbook and some very blurry RealPlayer videos of a 2001 course. The learning curve is still very steep. Perhaps with the ability to run the analysis in R, you will be motivated to start the climb.

mmmm...very nice!

ReplyDeleteNice R foo in matching in the design files to make a usable dataset... it's nice to have a walkthrough of what you need to actually use bayesm. Thanks!

ReplyDeleteThis is super useful !! Thank you so much for explaining it step by step. I had purchased Bayesian Statistics and Marketing but found it very hard to go through it. I still need a much more simplified version for it. Any site you could recommend ?

ReplyDeleteAlso in your last few lines you've talked about not being able to interpret the co-efficients since its not a linear analysis and hence we will need to perform a simulation. Would I be too greedy if I ask you to make a post on it ? Or alternatively if you could give me some pointers?

I am very happy to hear that the post was useful. I am even happier to see that you have started the steep climb. If it is any comfort, you are not alone in finding the BSM book difficult. However, it is actually well-written, which you will discover once you have learned more. I gave the link in the post to the chapter by Rossi and Allenby because it seems more introductory. Some find the technical reports by Sawtooth Software to be more approachable (see their website under CBC/HB).

DeleteThe problem is that hierarchical Bayes choice modeling is hard because it is a hierarchical model, it is Bayesian, and it is choice modeling (i.e., the dependent variable is multinomial). I would recommend that you start with choice modeling. I gave a link in the post to John Fox because he writes well and provides a lot of free material. You need to learn first what is wrong with the linear probability model, then master logistic regression and multinomial models. Next, what is a hierarchical model (also called a multilevel model or a mixed model)? Many recommend Gelman and Hill's book "Data Analysis Using Regression and Multilevel/Hierarchical Models." Finally, Bayesian estimation cannot be treated as a "black box" that you can point-and-click your way through because it is too easy to go astray. You need to know enough about what you are doing so that you are aware of all the ways it can go wrong. You don't need to be a mathematical statistician, but you do need to know more than how to navigate a GUI and what numbers to write down. John Kruschke's book "Doing Bayesian Data Analysis" may be a good place to begin your study. I thought that Simon Jackman's book "Bayesian Analysis for the Social Sciences" was written well, but then I have a social science background. I wrote a review of it on Amazon. I gave links to Kenneth Train's book and video course in the post because his work is cutting edge and because it is not all technical. That is, he discusses the behavioral models that might lead us to use Bayesian estimation or something like maximum simulated likelihood (mlogit r package). I also studied econometrics in graduate school.

I make these personal references because you need to find references in your field. Each treatment has its own viewpoint and jargon. Although I have subdivided the task into three components, you should seek out references in your area of research with examples that you can relate to. Let me what you find. There is an email address under contact.

Great post! Carry on the good job with the blog ;)

ReplyDeleteThank you for this very useful post! I have already used bayesm and I am not sure about one thing: If I want to know, if the coefficients are significant (I know, that "significant" is not really the correct term in bayesian analysis), can I just use the r-command

ReplyDeletet.test(out$betadraw[,,501:2000])?

It depends on how you wish to treat your individual-level estimates. You can decide to remain entirely within the Bayesian framework and use the posterior distributions to compute confidence or highest density intervals. Or, you may treat the estimates as if they were any other subscale score, that is, derived scores from repeated observations of each person (“fancy” sums and differences). Then, you are free to do with the estimates as you would do with any subscale score, including t-tests. You need to be careful about significance tests that assume independence because the “subscale scores” were calculated using a hierarchical or multilevel model, which is why some prefer highest density intervals from the posterior distribution (see John K. Kruschke Doing Bayesian Data Analysis).

DeleteThank you very much.

DeleteI now think, that using the t.test-command is wrong; I only have to calculate the ratio mean/sd of the draws. I explored this by comparing the results of a bayesian analysis of data using bayesm (rmnlIndepMetrop) with a Maximum Likelihood Estimation of the same data. However, I will try to learn more about analysis of the posterior distribution.

Super!....Thank you

ReplyDeleteIm having trouble interpreting your design matrix :c

ReplyDeleteAnyone able to help me understand it?

Ps otherwise i love ur approach and thanks for your effort in sharing.

If you review the last section where I interpret the coefficients and then look back at my description of the design matrix, it ought to be clear. I am assuming that you are familiar with dummy coding categorical variables in regression analysis, which I did not cover in the post but is easy to learn about by searching the web.

DeleteHi, thank you for the blog post. It is very helpful .

ReplyDeleteI tried your approach in R after making my own response and design set in excel and made the list structure. but I am getting this error on running rhierMnlRwMixture, " Error: Requires element X of lgtdata[[ 1 ]] "

Can you please help me undertsnad this error. thanks again

This reminds me of the error messages I received when I started using bayesm. So there seems to be a problem with the independent variables in lgtdata. It is likely that the first element, lgtdata[[1]], generated the error message. When I get such an error message, I first check the lgtdata structure with str( ) or just print out lgtdata[[1]]. It seems that the design in $X was not read correctly. Usually, I have made a typing error. You may need to set i=1 and run the syntax inside the loop in order to see what is going wrong. Remember that X contain tdesign, which results from row binding. You need to reset lgtdata<-NULL each time. Debugging is all trial and error. Good luck.

Deletethanks for your reply. I succeeded in running the code but now i get this error, "Error in chol.default(H) : the leading minor of order 2 is not positive definite".

DeleteI understand that a square matrix is generated on which a metropolis algorithm is executed. for this the square matrix needs to be positive definite. the diagonal element of the square matrix, is given by the "nu" parameter in the "prior" argument.

The dimension of the square matrix is the number of variables. I need to understand how this matrix is related my design matrix and if I need to revisit my design matrix.

sorry to bother you again and thanks for everything !

This is the type of error that one might get when the Choleski decomposition of the t(X)%*%X matrix fails because the design matrix has deficient rank, which means that you should check your design matrix for linear dependencies (e.g., only # levels - 1 degrees of freedom). My guess is that one of your columns in your design matrix is a linear combination of the other columns. At least, this is where I would begin. Let us know what you find.

DeleteHi, thank you for the response. So, I found that decreasing the number of attributes and increasing the number of runs works. I am actually using optfederov in the Algdesign package to get the choice sets and then I convert into a design matrix using Excel.

DeleteSpecifically, number of Attributes = 3, Level =3 each and runs = 9 works. But, if I increase the number of attributes I get the error. How do I ensure that my design matrix has enough rank?

My choice sets are definitely orthogonal, because I ensured that I keep the number of runs according to number of attributes and levels so as to get a orthogonal matrix. But, when I go from there to generating lean design set, thats where the problem occurs. Also, I need to calculate the utilities at every level for every respondent. Thank you again !

Whenever I see your error message, I always recheck the R code to be certain that I am entering the design matrix correctly. AlgDesign yields optimal designs and lets you know if there is a problem, so I doubt that the design itself is the source of your difficulties.

DeleteI do bayesian estimation of the same model in two different experimental groups. To see, if a coefficient is different from 0, I calculate the hpd-Intervalls of the MCMC-Draws, but I also want to compare the same coefficient in the two experimental groups (the Hypotheses is, that the cofficients are different). I have been thinking about using a kind of nonparametric two Sample t-Test to compare the draws of the population mean of the coefficients, e.g. the Man-Whitney-U Test. The problem is, that I can just increase the number of draws in the MCMC and the Test will reach significant values. So the question arises: Is there an appropriate way to compare draws of a coefficents of the same model in two different samples?

ReplyDeleteThank you very much in advance!

The rhierMnlRwMixture function allows you to enter covariates, which is what an economist might call your two experimental groups or just someone thinking of this as an analysis of covariance and testing the interaction of the group covariate with the other independent variables. However, you should review the documentation for this function and be certain that you center your dummy coding for these groups (e.g., an effect coding like -1 and +1 would work if your two groups had the same number of respondents, but be careful for the mean of z must be zero). The example from the rhierMnlRwMixture documentation shows how to center z.

DeleteYour question is whether the same coefficients describe both groups, or whether you need different weights for the two groups. It is the sample sizes and not the number of draws that determines the degrees of freedom. If it helps, you could think of this as running tests for differences on each coefficient from two separate runs on the two experimental groups. That is, one estimates the parameters separately for each experimental group, outputs the coefficients for all the respondents in each group, and performs a statistical test to determine if the coefficients are different. Regardless of the number of draws for each separate group, the number of respondents remains the same. Hopefully, this additional detail will not be confusing.

Thank you for this very helpful suggestions. However, now some more questions arise. In my application, it may be necessary, to hold some coefficients constant across respondents. Therefore, I used “RSGHB” instead of bayesm. However, RSGHB does not allow for covariates, while bayesm does not allow for fixed coefficients. I think, that I would have to add another step (as described by the book of Kenneth Train, Chapter 12) in the function rhierMnlRWMixture, to draw the fixed coefficients in each iteration of the MCMC from the pooled data? I am not sure that I will do everything right, if I try to do this. Do you know a simple method to estimate fixed and random coefficient together?

DeleteThe next question is about the second part of your answer. I also considered to estimate separate models for both groups and test, if the coefficients are different. You write “That is, one estimates the parameters separately for each experimental group, outputs the coefficients for all the respondents in each group, and performs a statistical test to determine if the coefficients are different.” Therefore, if I have groups with n1 and n2 respondents, I calculate the coefficient for each respondent from the draws of the individual coefficients. Then I use a t-test (resp. nonparametric test) to compare the n1 coefficients of group 1 to the n2 coefficients of group 2 (without considering the draws or the variance in the draws any longer)? Did I understand this correctly?

I had already considered to do it this way, but I was not sure, if it is correct, because the individual coefficients are shrinked towards the population mean. Therefore my idea was to compare the draws of the population means of the two groups directly without looking at individual coefficients. Is there a way to do this or does this make no sense?

Question #1: The mlogit package in R will fit the types of models that Train discusses in his book (see the mlogit vignette on running the Kenneth Train exercises).

DeleteQuestion #2: I added the phrase "if it helps, you can think of this as" because I do not advocate this type of post hoc analysis for all the same reasons that hold for multilevel data in general. However, I thought that the comparison might help clarify the issues for those who are not familiar with topic.

Many thanks once again!

DeleteOne can implement covariates in RSGHB by using fixed parameters. For some users, it is not quite as straight-forward as it is in bayesm, but then again RSGHB can be easier to use than bayesm in other ways.

DeleteWe had a poster at the 2015 Advanced Research Techniques forum demonstrating the value of covariates and how to implement them in RSGHB, bayesm, and Sawtooth's CBC HB (a commercial software package). The poster and analysis code can be found here:

https://github.com/jeffkeller87/ART2015_Covariates

Hi Joel,

ReplyDeleteHave you done experimental design and created choice set design in R? I mean something like SAS OPTEX, but in R? Can you shed some light on that please?

SAS seems to offer the most complete off-the-shelf solutions for constructing optimal experimental designs. For a summary of what is available in R, you might read the review of the book “Optimal Experimental Designs with R” in the Journal of Statistical Software (Ulrike Gromping, October 2011). However, is optimal design necessary for hierarchical Bayes estimation? I have run many choice models with random sampling from the complete factorial design (e.g., randomly select a level or a value separately for each attribute and construct the choice set on the fly while keeping track of what was presented and what was selected). Fractional factorial designs were popularized with rating-based conjoint yielding individual regression estimates for each respondent (search “SPSS Conjoint” for examples). Jordan Louviere’s “Analyzing Decision Making” shows in detail how to extend this approach to aggregate-level choice model. Of course, none of this is needed with hierarchical Bayes.

DeleteHi Joel,

DeleteThank you for your kind comment!

I am pretty new to discrete choice model and R. I am doing a dummy project just for the sake of learning and have asked my friends in social media to fill a survey. Can you guide me where i could have done better. I have 4 attributes with 2 level and 2 attributes with 3 level. I created an OMEP in R package AlgDesign OpFederov and got 16 alternatives. I used another R package to create balanced incomplete block design of 80 choice sets. each choice set has 4 alternative. could i have improved anywhere in this process?

Also, i have heard a lot about D-efficiency. how do we interpret this? If you compare this with R square in linear regression.

Regards,

Azim

John Hauser offers an introduction in his post called "Note on Conjoint Analysis" that might help you. It sound as if you have an orthogonal fractional design (see my post A Brief Tip on Generating Fractional Factorial Designs in R). Now, why is this a choice design? Are you modeling choices made in some real context (e.g., four different modes of transportation or four different brands)? If not, why not simply ask for a preference rating? Regrettably, the comment section is not a good place for individualized instruction. However, if you read the Hauser note carefully, you ought to see how these concepts are applied in practice.

DeleteHi Joel,

DeleteYes, i have four different alternatives in real context, which is why i have this design of OMEP for alternative design and BIBD for choice set design.

Hi Joel,

ReplyDeleteI have a code that i use to generate balanced incomplete block design in R. We have value of trt as 14 and k as 4 which are fixed. However we change value of b in the find.bib() function to generate a design and check if that design is balanced incomplete block design using isGYD() function.

bibd <- find.BIB(trt = 14, k = 4, b = 64)

bibd

isGYD(bibd)

The problem is i have to do this multiple times and may be hundreds of times until we get confirmation from isGYD function that this design is a balanced incomplete block design. We do this task manually!

My question is can we automate this with a combination of for loop and If Then condition? my algorithm is, for i =1 to 100, create a block design and then test if that design is balanced incomplete design. If it is, save this design and exit from loop. If it was not balanced incomplete design, continue to next iteration of loop.

# run this 1 to 100 times

for (i in 1:100) {

#create balanced incomplete block design by passing value of i fo b

bibd <- find.BIB(trt = 14, k = 4, b = i)

#check if this design is a balanced incomplete block design

if (isGYD(bibd)) {

#save this design and exit loop

}

#if this iteration didn't give us balanced incomplete block design, take us to next iteration

}

Any help?

Sorry, but this level of detail might be better handled in a setting designed for such issues (e.g., Stack Exchange).

DeleteCan you give some hint on how to do latent class conjoint in R? i tried poLCA package and it turns out that we clusters derived doesn't respect the idea of a 'choice-set'. i.e. different alternatives in a choice set are getting assigned different clusters. Which is strange. Have you done this? Many thanks!

ReplyDeleteGiven the R code above and in the updated post, Let's Do Some More HB Choice Modeling in R, one simply changes the ncomp argument from “=1” to “=the number of latent classes” (e.g., ncomp=3 will yield 3 latent classes). When ncomp=1, we are assuming that individuals can have unique utilities or part-worths or coefficients but these all come from the same multivariate normal. When ncomp>1, the function fits a mixture of multivariate normal components as one would in a finite mixture model (e.g., the mclust R package). You should remember that if you have predefined groups (e.g., region A vs. region B), you add these to the model in Z as covariates that have been centered and without an intercept.

DeleteHi Joel,

ReplyDeleteFirst of all let me confess that you blog is one of the greatest ressources on the internet!

One question regarding the article above: How can I estimate individual-level utitlity values? Or maybe I just missed that point.

Thx for your advice.

Regards

Dirk

The section "Finally We Can Run the Analysis" is where you want to look. bayesm outputs a list and one of the elements is betadraw. I used the apply function to calculate the mean for different numbers of draws. Take a look and let me know if you have any problems.

DeleteHi Joel,

DeleteAh okay, I see.

So I guess I can get those individual-level values with:

U <- apply(out$betadraw[1:100,1:6,],c(1,2),mean)

Right?

Thx so much!

Dirk

Yes, but be careful about the indices. As you show in your R code, betadraw is a cube defined as respondents x coefficients x draws. So, you first decide how many draws to discard. Let's say you compare the coefficients listed in the above post and decide to eliminate the first 500 draws. Now, use apply to average across the last index for draws:

Deleteestimate<-apply(out$betadraw[,,501:2000], c(1,2), mean)

Oh yes, that's right - thank you Joel for this important note!

DeleteJust one last question in this context:

In package bayesm one finds another function called 'rscaleUsage' for analyzing data on scale usage heterogeniety.

Runnig this function on some data I am able to retrieve the adjusted mean values:

Res <- rscaleUsage(...)

Res$mudraw

Is there also a possiblity to retrieve the adjusted scale values for each respondent on each variable? It seems to me that the function does not return those values - but I wondered if I can calculate those values. But I must admit that this exceeds my theoretical knowledge here.

Best regards

Dirk

It has been some time since I looked at the rscaleUsage function, but as I recall, it did not output individual-level estimates. I never pursued the topic because I do not agree that halo effects are measurement error. Uniformly high brand ratings reflect brand equity, and uniformly high importance ratings represent greater product involvement. You will find a number of posts on this blog outlining my views on halo effects.

DeleteHi Joel,

ReplyDeleteThank your for this great post and good illustration.

I am an absolute beginner and after days of reading I am still trying to understand how the estimation procedure actually works (what is done by the function rhierMnlRwMixture (or others in bayesm package)?).

I know that for parametric models I need to prespecify a prior distribution on the coefficients and a prior on the parameters of the coefficients and additionally a likelihood function. Did I get it right that then the posterior distribution is derived analytically and finally draws are taken from that posterior?

Can you please help me to understand the process?

My post was meant only to show how one might use R to analyze hierarchical choice data. I avoided the details because they are just far too complicated for a blog. You might take a look at Justin Esarey at Rice University. He provides video and R script for a course in Bayesian Statistics (http://jee3.web.rice.edu/teaching.htm) that covers hierarchical models.

DeleteHi,

ReplyDeletei have a question. How have i to construct the design matrix if i have 5 features with different levels. For example Feature one has five levels and so on. Needs $X then five columns for the level price or habe i just one column with numbers one to five for the levels. So if i would be right, then should i have for $X round abound 25 Columns or? And if i plot the loglike i miss the typical Burn in in the plot. I have just a fluctuation around a constant. Is this normal? I constructed my Designplan with XLstat for Excel.

Thank you for your Feedback.

In choice models, where there are only a small number of price levels, it makes sense to treat price as a categorical factor (e.g., one requires only 2 dummy variables to code 3 levels of price as shown above). Of course, price can be treated as a continuous variable. In fact, you can randomly generate price as a uniform variable over a specified range and then fit a polynomial function or use a log transformation.

DeleteHi,

Deletethank your for your Feedback i will show you what i've done to be sure to be right.

I have 5 Price Levels (-10%,-5%,0,+5%,+10%), 2 Levels for Price Communication (Yes/No), 4 Levels for Questions, 3 Levels for Additinal Benefits and 2 Levels for Sponsoring (Yes/No). I choosed as reference level the actual product so i can show the improvements.

I will construct the design-matrix like this.

design1 <- matrix(c(

0,1,0,0,1,0,1,0,0,0,1,

0,1,0,0,0,1,0,0,0,1,0,

0,0,0,0,1,0,1,0,0,1,0,

0,0,0,0,0,0,0,0,0,0,0)

,nrow=4,ncol=11,byrow = TRUE)

Is this correct or sensful? You safed my life with this post here.

Thank you.

I am assuming that design1 describes a choice set that a respondent would be shown. Then, the number of rows would indicate three alternatives were shown with the last row representing "none of these" (e.g., in my example, the first row was Brand A and the second row was Brand B where A and B stand for different brands such as R and Python or BMW and VW). Where are your columns for these three alternatives?

DeleteHi,

Deletewhat do you mean with, "Where are your columns for these three alternatives?". Which three alternatives, each row is a alternative.

Sincerly,

Alex

I have copied the relevant section from the above post here:

DeleteOn to the Design Matrices

So far, we have a data frame with the categorical dependent variable called choice. Now we need to attach a design matrix for each of the nine choice sets. It might be easiest to explain by presenting the R code. Design #1 is for the first choice set. There are 6 columns for the 6 parameters to be estimated in this design. That is, there are 2 dfs for the three alternatives, plus 2 dfs for Brand A price and 2 dfs for Brand B price. I have used dummy coding so that the third alternative, Neither, is the base with only 0s. The columns, in order, represent the effects for Brand A, Brand B, Brand A Price 1, Brand A Price 2, Brand B Price 1, and Brand B Price 2. Brand A and B appear in every choice set; only the effects for price vary.

I added now three columns.

ReplyDeletedesign1 <- matrix(c(

1,0,0,0,1,0,0,1,0,1,0,0,0,1,

0,1,0,0,1,0,0,0,1,0,0,0,1,0,

0,0,1,0,0,0,0,1,0,1,0,0,1,0,

0,0,0,0,0,0,0,0,0,0,0,0,0,0)

,nrow=4,ncol=14,byrow = TRUE)

I thought your first two columns are for the Brand A and Brand B. But i see what you mean. Cause my None-Option is identical with my reference. So i have to adapt my designmatrix to make the difference clear between the None-Option and my reference product.

So i could three columns for the alternatives. Which could lead to problems with interpretation. Or i could codify some category without an reference niveau, or?

Or would effect-coding be more useful?

DeleteYou are free to use whatever coding you prefer.

DeleteIs there in the bayesm Package an Function to say something about the Goodness of Fit? Coulnd't find something in the documentation. And the importance of the relativ importance of the features, or can i use the span like in the TCA?

ReplyDeleteThank you.

Both concepts, goodness-of-fit and relative importance, flow easily from a linear model estimated using some form of least squares minimization (which is why the bayesm R package does not have functions for either one).

DeleteHi, I am not sure if you will see this, I tried to replicate ur code, but I am stuck at the very end when I wish to use the generated model to make prediction. Could you please provide some guidance on how to make predict in bayesm package? Thank you

ReplyDeleteOnce you have the coefficients, you return to the multinomial logit model and substitute these estimates into the equation. I did not cover any of this in my post. However, this material is presented for aggregate models in the book "R for Marketing Research and Analytics" by Chris Chapman and Elea McDonnell Feit. Here is a link to one of their slides http://r-marketing.r-forge.r-project.org/slides/chapter13-phillyR/ConjointR20150418.html#/15 (you will need to repeat this for each respondent when fitting a hierarchical model). Additional links can be found on the first slide of this presentation. Good luck.

DeleteThank you Joel for the post. I got thru the entire A3 code in Rossi's book, so I believe I do have a handle on hierarchical bayesian model in general. I am confused on several points. 1) You put the choice of brand A and B in the design matrix. In my view, you would have 4 dependent variables made up by brand-price combinations, and the (brandA,B,none) categories should be the regressant or results which represent the classification at the output. 2) based on the standard multinomial logistic regression approach given in wikipedia, there would be different sets of betas for each possible outcome. However, you listed only one set of betas, which seems to imply you only use one set of beta for all possible outcomes. 3) I plugged in the beta values and the design matrix numbers in excel, and the predicted results (largest numbers) do not at all agree with the Y values: [1] 2 2 1 3 1 3 1 3 2. If you could kindly enlighten me where did I go astray.

ReplyDeleteSo, there are three alternatives available in each choice set. You can pick only one of the following: Brand A, Brand B, or None of These. Toward the end of the post, I have listed the estimated coefficients used to calculate the utilities for each of these options. None of These gets a zero because of the coding. The Brand A utility is the sum of the appeal of Brand A and its price level. The same applies to Brand B.

DeleteAlthough every respondent has their unique estimates, let us use the average values as an example. Both Brand A and B have negative values, meaning that at their highest price point (because of the dummy coding) None of These is more likely to be selected. Brand B stays negative regardless of price (-1.99+1.39<0). Brand A, however, goes positive for both of its lower price points.

Remember that share is exp(Brand A+Brand A Price)/[1+exp(Brand A + Brand A Price)+exp(Brand B + Brand B Price)], where exp(0)=1 represents None of These.

ok. That's the problem. I am using the mean value for beta. Applying them to user 1 would not necessarily agree with the experimental results.

Delete